The Bach Doodle Dataset is composed of 21.6 million harmonizations submitted through the Bach Doodle. The dataset contains metadata about each composition (such as the country of origin and feedback), as well as a MIDI of the user-entered melody and a MIDI of the generated harmonization. In total, it contains about 6 years of user-entered music.

License

The dataset is made available by Google LLC under a Creative Commons Attribution 4.0 International (CC BY 4.0) License.

Dataset

To make music composition more approachable, we designed the Bach Doodle where users can create their own melody and have it harmonized by a machine learning model in the style of Bach.

In three days, the web app received more than 50 million queries for harmonization around the world. Users could choose to rate their compositions and contribute them to a public dataset, which we are releasing here. We hope that the community finds this dataset useful for applications ranging from ethnomusicological studies, to music education, to improving machine learning models.

This user-contributed dataset contains over 21.6 million miniature compositions. Users spent a combined 78.8 years composing them, and together the compositions amount to about 6 years of music. The compositions are split across 8.5 million sessions. Each session represents an anonymous user’s interaction with the web app for a single page load, and may contain multiple data points. Each data point consists of the user’s input melody, the 4-voice harmonization returned by Coconet, and other metadata: the country of origin, the user’s rating, the composition’s key signature, how long it took to compose the melody, and the number of times the composition was listened to.
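To put these totals in per-composition terms, the following is a rough back-of-the-envelope sketch. It uses only the rounded figures quoted above and assumes a 365.25-day year, so the results are approximate averages, not statistics computed from the data itself.

```python
# Rounded totals quoted in the text above.
COMPOSITIONS = 21.6e6      # "over 21.6 million" compositions
SESSIONS = 8.5e6           # sessions
YEARS_OF_MUSIC = 6         # total duration of the music
YEARS_COMPOSING = 78.8     # total time users spent composing

# Assumption: one year is 365.25 days.
SECONDS_PER_YEAR = 365.25 * 24 * 3600

avg_music_seconds = YEARS_OF_MUSIC * SECONDS_PER_YEAR / COMPOSITIONS
avg_composing_seconds = YEARS_COMPOSING * SECONDS_PER_YEAR / COMPOSITIONS
compositions_per_session = COMPOSITIONS / SESSIONS

print(f"Average music per composition: {avg_music_seconds:.1f} s")
print(f"Average composing time per composition: {avg_composing_seconds:.0f} s")
print(f"Average compositions per session: {compositions_per_session:.2f}")
```

Under these assumptions, an average composition is a little under 9 seconds of music, took roughly two minutes to compose, and each session produced about 2.5 compositions.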

For more information about how the dataset was created and several applications of it, please see the paper where it was introduced: The Bach Doodle: Approachable music composition with machine learning at scale.

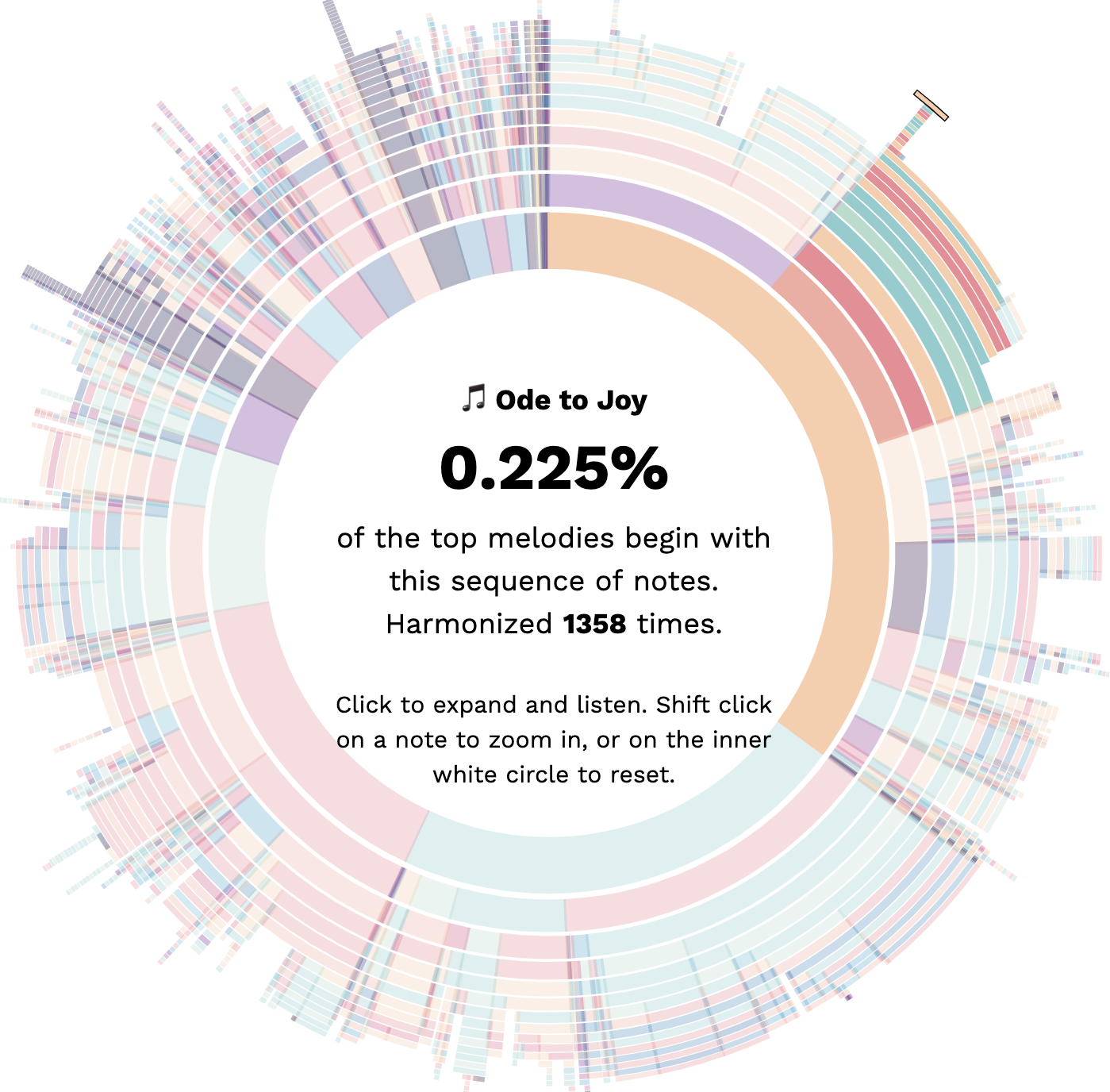

Visualization

We’ve built an in-depth exploration of the melodies in the dataset, including the top overall repeated melodies, the top repeated melodies in each country, and the regional hits that surfaced.

Download

The Bach Doodle Dataset is available as a gzipped tar archive (.tar.gz), in either jsonl or tfrecord format:

bach-doodle.tfrecord.tar.gz

Size: 6.1G

SHA256: 1d3ad35ce730cc66ba213ce8e46a140a49ea2644d5ffe29b508d6ceaf87e30cf

bach-doodle.jsonl.tar.gz

Size: 7.5G

SHA256: 86663279ac5686f692104fccf863bed36a92aaf7a4921cf39ed9c6de8401a02f
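After downloading an archive, it is worth checking it against the published SHA256 value. The following is a minimal sketch using only Python's standard `hashlib`; the helper names `sha256_of_file` and `verify_download` are illustrative and not part of any Magenta tooling, and the local path you pass in is up to you.

```python
import hashlib

# Checksums copied from the download listing above.
EXPECTED_SHA256 = {
    "bach-doodle.tfrecord.tar.gz":
        "1d3ad35ce730cc66ba213ce8e46a140a49ea2644d5ffe29b508d6ceaf87e30cf",
    "bach-doodle.jsonl.tar.gz":
        "86663279ac5686f692104fccf863bed36a92aaf7a4921cf39ed9c6de8401a02f",
}


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA256 digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_download(path, name):
    """Return True if the file at `path` matches the published checksum for `name`."""
    return sha256_of_file(path) == EXPECTED_SHA256[name]
```

For example, `verify_download("bach-doodle.jsonl.tar.gz", "bach-doodle.jsonl.tar.gz")` returns `True` only if the file in the current directory is an intact copy. Reading in chunks keeps memory use small even for the multi-gigabyte archives.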

Sharded

The dataset is also available as a set of 192 tfrecord or jsonl shards:

- tfrecord: https://storage.googleapis.com/magentadata/datasets/bach-doodle/bach-doodle.tfrecord-00???-of-00192.gz
- jsonl: https://storage.googleapis.com/magentadata/datasets/bach-doodle/bach-doodle.jsonl-00???-of-00192.gz

where ??? is a 3-digit, zero-padded number from 000 to 191.
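The shard naming pattern above can be expanded programmatically. This is a small sketch that only builds the list of URLs; the function name `shard_urls` is illustrative, and downloading is left to whatever tool you prefer.

```python
BASE_URL = "https://storage.googleapis.com/magentadata/datasets/bach-doodle"
NUM_SHARDS = 192


def shard_urls(fmt):
    """Return the URLs of all shards for `fmt`, which is 'tfrecord' or 'jsonl'."""
    if fmt not in ("tfrecord", "jsonl"):
        raise ValueError(f"unknown format: {fmt!r}")
    # Shard indices run from 00000 to 00191, zero-padded to 5 digits.
    return [
        f"{BASE_URL}/bach-doodle.{fmt}-{i:05d}-of-{NUM_SHARDS:05d}.gz"
        for i in range(NUM_SHARDS)
    ]


jsonl_urls = shard_urls("jsonl")
print(len(jsonl_urls))
print(jsonl_urls[0])
```

Working with shards is convenient when you only need a sample of the data or want to process the files in parallel.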

Each data point has the following fields:

| Field | Description |
|---|---|
| session_id | A unique ID for this session. To avoid any personally identifiable information, a user (or a session) represents a new request to the doodle, not an IP address. If the same physical person opened the doodle in multiple tabs, this would result in multiple sessions (or users), one for each tab. |
| input_sequence | The melody entered by the user, represented as a serialized NoteSequence. |
| output_sequence | The 4-voice harmonization returned by Coconet for the user melody, represented as a serialized NoteSequence. |
| backend | r or l, where r means the harmonization request was sent to a cloud TPU, and l means it was computed in the user’s browser using TensorFlow.js. |
| loops_listened | How many times this session listened to the composition (either the input melody or the output harmonization). |
| country | The location the doodle was accessed from, as a 2-letter country code. |
| composition_time | The length of time the doodle was open in this session, in milliseconds. |
| feedback | The user’s rating, if present: 0 is poor, 1 is neutral, 2 is good. The value is “” if no rating is present. |
| key_sig | The key of the composition, if the user changed the key in the advanced mode option. |
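As an illustration of how the metadata fields might be interpreted, here is a hedged sketch in Python. It assumes that each jsonl line decodes to a flat JSON object keyed by the field names in the table; the actual layout of the files may differ, so inspect a real record before relying on this. The sample record is made up for the example, and the two NoteSequence fields are deliberately left untouched.

```python
import json

# Label mappings taken from the field descriptions in the table above.
FEEDBACK_LABELS = {"0": "poor", "1": "neutral", "2": "good"}
BACKEND_LABELS = {"r": "cloud TPU", "l": "browser (TensorFlow.js)"}


def describe_metadata(record):
    """Turn the coded metadata fields of one data point into readable values.

    Assumes `record` is a dict with the field names from the table
    (an assumption about the jsonl layout, not a documented schema).
    """
    feedback = str(record.get("feedback", ""))
    return {
        "country": record.get("country"),
        "backend": BACKEND_LABELS.get(record.get("backend")),
        # An empty feedback value means the user gave no rating.
        "rating": FEEDBACK_LABELS.get(feedback),
        # composition_time is given in milliseconds.
        "composition_seconds": float(record["composition_time"]) / 1000.0,
        "loops_listened": int(record.get("loops_listened", 0)),
    }


# Hypothetical record for illustration only; not taken from the dataset.
sample_line = (
    '{"session_id": "example", "backend": "l", "loops_listened": 3, '
    '"country": "US", "composition_time": 45000, "feedback": "2", "key_sig": ""}'
)
summary = describe_metadata(json.loads(sample_line))
print(summary)
```

Converting `feedback` to a string before the lookup lets the same code handle ratings stored either as numbers or as strings.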

How to Cite

If you use the Bach Doodle Dataset in your work, please cite the paper where it was introduced:

Cheng-Zhi Anna Huang, Curtis Hawthorne, Adam Roberts, Monica Dinculescu, James Wexler, Leon Hong and Jacob Howcroft. "The Bach Doodle: Approachable music composition with machine learning at scale." International Society for Music Information Retrieval, 2019.

You can also use the following BibTeX entry:

@inproceedings{bachdoodle2019,
  author = {Cheng-Zhi Anna Huang and Curtis Hawthorne and Adam Roberts and Monica Dinculescu and James Wexler and Leon Hong and Jacob Howcroft},
  title = {The {B}ach {D}oodle: Approachable music composition with machine learning at scale},
  booktitle = {International Society for Music Information Retrieval (ISMIR)},
  year = {2019},
  url = {https://goo.gl/magenta/bach-doodle-paper}
}