Editorial Note: In this guest post, blogger Dan Jeffries discusses how he and his team used Piano Transformer to compose Ambient music.

“AI isn’t just creating new kinds of art; it’s creating new kinds of artists.” - Douglas Eck, Magenta Project

Can AI make beautiful music?

In this article, my team and I dig deep to find out if neural nets can compose ambient music alongside the great masters of the art.

Ambient is a soft, flowing, ethereal genre that I’ve loved for decades. There are all kinds of ambient, from white noise, to tracks that mimic the murmur of soft summer rain in a sprawling forest. But I favor ambient that weaves together environmental sounds and dreamy, wavelike melodies into a single, lush tapestry.

“Cuando el Sol Grita la Mañana,” by Leandro Fresco, featured on the ‘Pop Ambient 2013’ album, exemplifies the genre for me. It’s almost instantaneously calming, filled with fluttering bells in the distance of a windy landscape of sound.

Can machine learning ever hope to craft something so seemingly simple yet intricate?

The answer is that it’s getting closer and closer with each passing year. It won’t be long before it’s commonplace to see artists co-composing with AI, using software that helps them weave their own soundscapes to delight audiences.

Here are three of the best sample songs that we generated with our machine learning model:

In this article, we’ll look at how we did it. Along the way we’ll listen to some more samples we really loved. Of course, some samples came out great, while some didn’t work as well as we hoped, but overall the project worked beautifully. I’ll also give you the trained model to play around with yourself!

Lastly, I’ll also show you an end-to-end machine learning pipeline, with downloadable containers that you can string together with ease to train a music-making machine learning model of your very own.

So let’s dive in and make some beautiful music together.

Like any good machine learning story, it all starts with the data.

Why I Picked Ambient

I didn’t choose ambient music randomly. I chose it for my team because of a deep love for the genre.

Over the last fifteen years, I’ve crafted a highly curated playlist of ambient music, which you can follow on Spotify. I used it as the base dataset to train our model.

The playlist has survived over time, as the way we listened to music changed around me. It started on CDs and downloaded mp3s and it moved to iTunes, before finding its current home on Spotify. I expand the list very, very slowly. Only songs that don’t disrupt the subtle streaming tapestry of the entire theme make the cut. Jarring and discordant notes get swiftly culled.

I listen to this playlist every day. Why? To help me write.

I’m listening to it right now as I write this article. Ambient music is utterly fantastic at helping you move into an alpha wave state as swiftly as possible. That’s the meditative, creative state of supreme concentration that comes when you get totally lost in an activity like meditation or dancing or writing. The playlist helps calm me down and focus me for the deep work of writing. After so many years, it acts almost like a Pavlovian trigger to shift me into that wonderful state of concentration that we call Flow.

Flow is when everything just “flows” and you get lost in the moment, totally absorbed in what you’re doing. Time seems to disappear. The past and the future are gone. Your mind drops away. You don’t have to think about the next step and the next, you just know what to do with effortless ease.

There are lots of different kinds of ambient music. Not all of them are great at inducing Flow. Some of it is more like dance music or Berlin techno. Some ambient is highly experimental, pushing the bounds of what a song is supposed to be with no melody at all, and some of it just mimics the natural world with looped recordings of rain or bells or blowing wind.

But to me, ambient music is ethereal and otherworldly, able to put the mind at ease quickly. There’s also a second, more subtle reason that I chose this kind of music. What the model generates doesn’t have to be completely perfect.

If one or two notes are out of place, they blend into the overall flow of the song. That’s a big difference from something like piano or drum music, which many machine learning music projects have focused on. If a drum beat is off or a note is out of place in a piano piece, it sticks out like a rusty nail from the whole.

In other words, the hazy softness of ambient music gave us a little more leeway to screw it up just a bit but still have something that sounds wonderful overall.

But now that I had my choice of music and my dataset I needed to figure out what algorithms would deliver on the promise of the playlist. To do that I surveyed the field, reading lots of articles and papers to find out what worked and what didn’t work.

Then I found the Magenta Project’s Music Transformer.

Transformers: Autobots, Roll Out!

Perhaps the most revolutionary architecture of the last few years is the Transformer, which surprised me with its versatility in Natural Language Processing. It’s behind mega-models like GPT-2 and GPT-3, which OpenAI just released as a commercial API product to build amazing new next-gen apps.

If you read my last article in the Learning AI If You Suck at Math series: The Magic of NLP, you’ll remember that I didn’t find NLP all that magical. That’s because the dominant method of dealing with time series and natural language data in 2017 was the LSTM, a recurrent neural network.

I didn’t find the LSTM all that good at dealing with long term memory, because it isn’t. LSTMs are recurrent neural nets (RNNs). They push forward like snow plows, remembering a little bit about the few time steps behind them, but forgetting long term structure and knowing nothing about the steps ahead. Bidirectional LSTMs do exist and they give models a better sense of context in both directions, but all RNNs tend to have a terrible recency bias and forget most deeper connections when they try to understand patterns.

I tried to generate good titles for my latest book and found NLP text generation an interesting toy that wasn’t all that much better than random title generators so I confidently declared my job as a writer safe from the AI revolution.

Then came the Transformer architecture just a few months later.

Researchers at Google delivered the Transformer in the landmark paper called Attention is All You Need and a blog post breaking it all down for us. The novel architecture was designed to solve neural machine translation tasks for Google Translate. It delivered a network that was highly parallelizable and much easier to train.

There are a couple of standard ways to do time series prediction with neural networks. We already talked about RNNs like LSTM which use recurrence to encode information from past events. Convolutions are another common approach. For images this usually involves learning patch-like structure from 2d convolutions of varying size over the image. For 1d time series like audio or language, this could involve “dilated” convolution that learns at multiple timescales. The creators of WaveNet successfully used dilated convolutions in their work, which made synthetic speech sound much more natural. Transformers ditch both recurrence and convolution, replacing them with a flexible learned attentional mechanism.
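To make the "multiple timescales" idea concrete, here is a minimal sketch in plain Python of a dilated causal convolution over a 1d sequence, plus a helper that counts how many past time steps a stack of such layers can see. The function names, the kernel size of 2, and the doubling dilation schedule are illustrative assumptions in the spirit of WaveNet-style stacks, not code from WaveNet or from our project.

```python
def dilated_causal_conv(signal, weights, dilation):
    """1d causal convolution: output[t] mixes signal[t], signal[t - dilation], ...

    Positions before the start of the signal are treated as zero.
    """
    out = []
    for t in range(len(signal)):
        total = 0.0
        for k, w in enumerate(weights):
            idx = t - k * dilation  # look back k * dilation steps
            if idx >= 0:
                total += w * signal[idx]
        out.append(total)
    return out


def receptive_field(kernel_size, dilations):
    """Number of input time steps visible to one output of the whole stack."""
    field = 1
    for d in dilations:
        # Each layer widens the view by (kernel_size - 1) * dilation steps.
        field += (kernel_size - 1) * d
    return field


# With dilation 2, each output adds the current sample to the one two steps back.
conv_out = dilated_causal_conv([1, 2, 3, 4, 5], [1, 1], dilation=2)
print(conv_out)  # [1.0, 2.0, 4.0, 6.0, 8.0]

# Four layers without dilation versus four layers with doubling dilation.
plain = receptive_field(2, [1, 1, 1, 1])
dilated = receptive_field(2, [1, 2, 4, 8])
print(plain, dilated)  # 5 16
```

With the same number of layers, the dilated stack sees roughly three times as far back, which is why dilation is attractive for long signals like audio.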

“Attention” gives neural networks a much deeper long term memory. What the heck is “attention” and how does that help us generate cool music?

As Chris Nicholson writes in his excellent introduction to attention:

“Attention takes two sentences, turns them into a matrix where the words of one sentence form the columns, and the words of another sentence form the rows, and then it makes matches, identifying relevant context.”

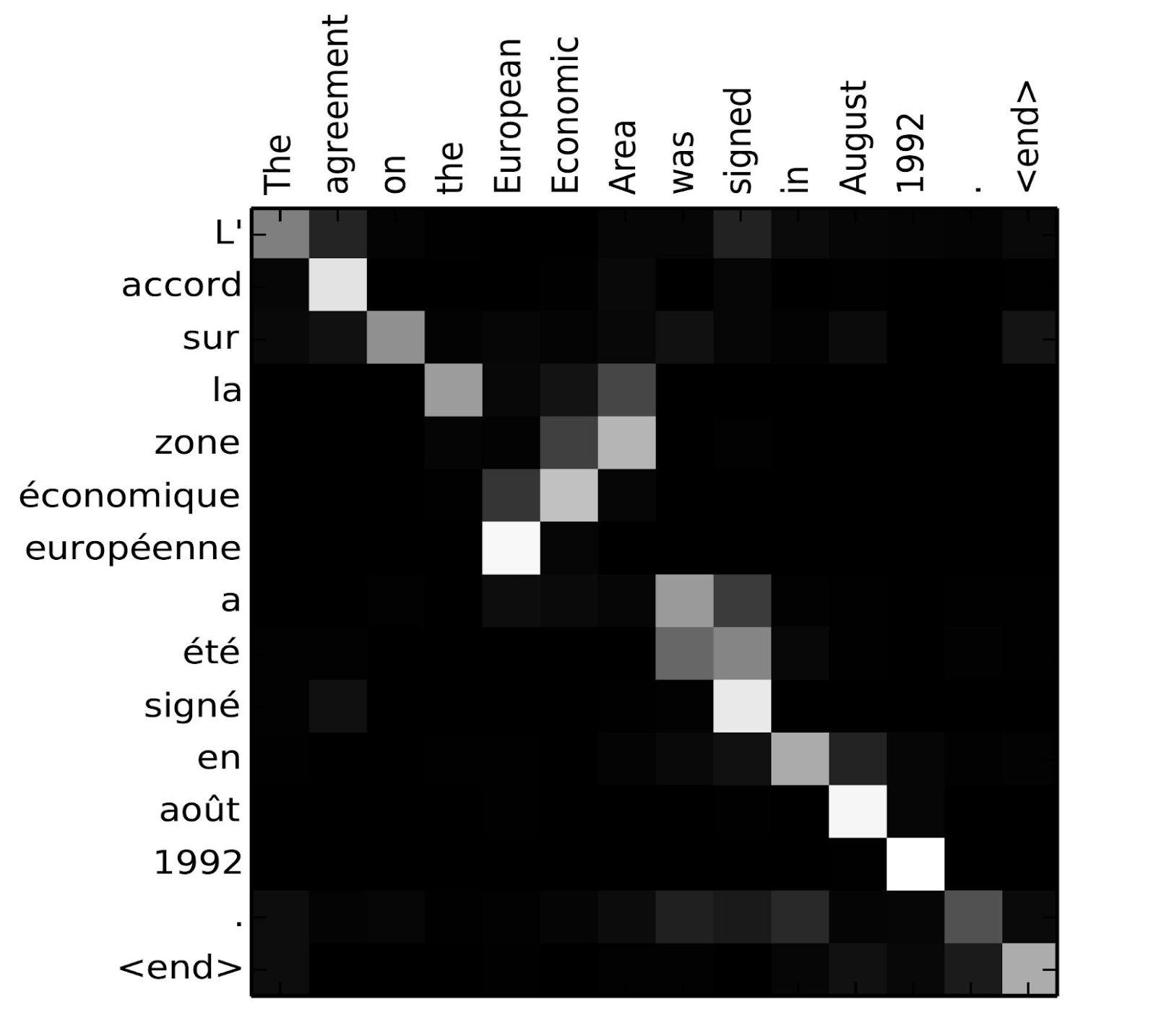

Check out the graphic from the “Attention is All You Need” paper below. It’s two sentences, in different languages (French and English), translated by a professional human translator. The attention mechanism can generate a heat map, showing what French words the model focused on to generate the translated English words in the output.

(Source: Attention is All You Need)

But the amazing thing about attention mechanisms is that you don’t need to lay out two different sentences. You can lay out the same sentence, which we call “self attention.” With self-attention we can turn transformers back on themselves to learn about the important words in the same sentence, rather than the difference between two translations of a sentence.

Jay Alammar explains self-attention beautifully in his post on Transformers and he gives us an excellent visual to understand how relating things in the sentence makes a big difference in understanding that sentence.

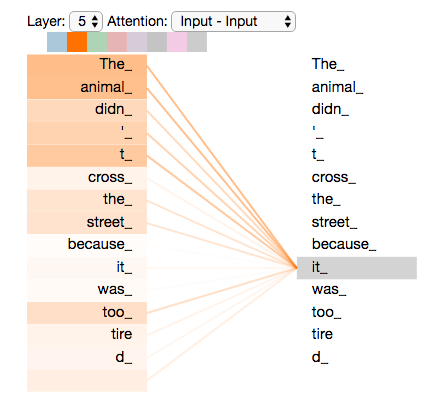

In a Transformer, each word is encoded with information about the relevance and relation of every other word in the sentence in a key value pair that the model can query. Take the graphic below, which focuses on just one word, “it,” and how it relates to every other word. Does “it” refer to “the animal” or “the road”? Humans have little trouble figuring that out but natural language models struggled with it for decades. Here we can see that the darkest orange colors on the heat map surround “the animal” because those words have the highest probability according to the model of referring to “it.”

(Source: Jay Alammar’s The Illustrated Transformer)

The math a model uses to decide relevance and importance is complex and there are a number of ways to do it, covered in multiple papers that detail attention mechanisms. But all you need to know is that each word gets encoded with how important it is to every other word. That means Transformers don’t just know about the few words that came before, as RNNs do, while knowing nothing about the words to come. Transformers know about every word in the sentence, forwards and backwards. That means they excel at both small and larger clusters of information and how they relate to each other.
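If you’d like to see the core idea in code, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: the queries, keys and values are just the input vectors themselves, whereas a real Transformer learns separate projection matrices and runs several attention heads in parallel. The toy two-dimensional “embeddings” are made up for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections (assumption).

    Returns (outputs, weights), where weights[i][j] says how much token i
    attends to token j.
    """
    dim = len(vectors[0])
    outputs, weights = [], []
    for query in vectors:
        # Score this token against every token in the sequence, itself included.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        weights.append(row)
        # The output is a weighted blend of all the token vectors.
        outputs.append(
            [sum(w * vec[i] for w, vec in zip(row, vectors)) for i in range(dim)]
        )
    return outputs, weights


# Hypothetical embeddings: tokens 0 and 2 are similar, token 1 is different.
tokens = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 2) for w in row])
```

Each row of `weights` is one line of the kind of heat map shown above: token 0 puts more weight on the similar token 2 than on the unrelated token 1, and every token gets to look at every other token in a single step.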

And that’s the real power of the Transformer. It’s much better at developing a long term memory about what it’s learned.

Since one of the biggest problems in music is long term structure, the good folks at the Magenta project realized attention mechanisms might work well on music if they made a few modifications. Specifically, they used “relative attention” to create the Music Transformer, which they explain beautifully on their blog:

“While the original Transformer allows us to capture self-reference through attention, it relies on absolute timing signals and thus has a hard time keeping track of regularity that is based on relative distances, event orderings, and periodicity. We found that by using relative attention, which explicitly modulates attention based on how far apart two tokens are, the model is able to focus more on relational features. Relative self-attention also allows the model to generalize beyond the length of the training examples, which is not possible with the original Transformer model.”

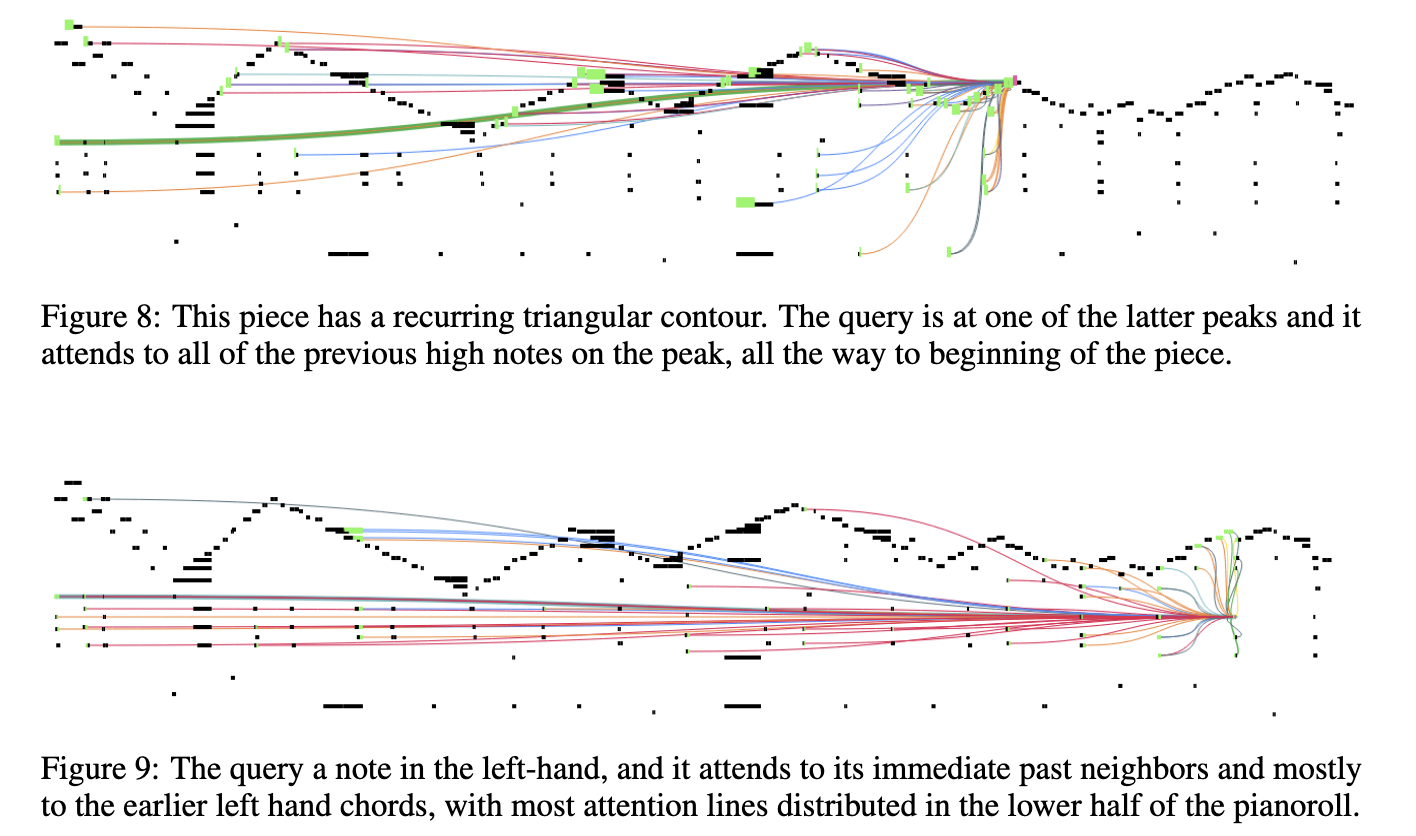

By modeling the relationships of notes, Music Transformer does much better at capturing the long term coherence of a single song and how the notes all relate to each other. The Magenta team visualizes how some of these notes connect to each other in the paper on their algorithm for relative self attention. Just like the word “it” you can see how one note is connected by lines to many other notes in the song. Each note is encoded with information about its relevance to other notes.
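Here is a rough sketch of what “modulating attention based on how far apart two tokens are” can look like. Each attention score gets an extra term that depends only on the distance between the two positions, so the same pattern applies wherever it occurs in the song. This is a simplified illustration, not Magenta’s implementation: it leaves out the learned projections and the memory-efficient tricks from the paper, and the relative embeddings are set by hand (normally they are learned) to favor notes four steps back.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def relative_attention_weights(queries, keys, rel_embeddings):
    """Causal attention weights with a relative-position term.

    The score for position i attending to an earlier position j is
    (q_i . k_j + q_i . rel_embeddings[i - j]) / sqrt(dim).
    rel_embeddings[d] is a hypothetical vector for "d steps back".
    """
    dim = len(queries[0])
    rows = []
    for i, q in enumerate(queries):
        scores = []
        for j in range(i + 1):  # causal: only the current and earlier notes
            content = dot(q, keys[j])
            position = dot(q, rel_embeddings[i - j])
            scores.append((content + position) / math.sqrt(dim))
        rows.append(softmax(scores))
    return rows


# Eight identical "notes", so content alone cannot tell positions apart.
notes = [[1.0, 0.0] for _ in range(8)]
# Hand-set assumption: only a distance of exactly 4 steps gets a boost.
rel = [[2.0, 0.0] if d == 4 else [0.0, 0.0] for d in range(8)]

rows = relative_attention_weights(notes, notes, rel)
for i in (5, 7):
    best = rows[i].index(max(rows[i]))
    print(i, best, i - best)  # both positions look 4 steps back
```

Positions 5 and 7 both attend most strongly to the note four steps behind them, even though their absolute positions differ. That is the kind of periodic, distance-based regularity that absolute timing signals have a hard time capturing.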

The more I read on Music Transformer, the more I realized I had found one of the most cutting edge approaches to making beautiful music.

A Herd of Elephants and Machine Learning

We used Pachyderm to build our machine learning pipeline.

Pachyderm makes it supremely simple to link together a bunch of loosely coupled frameworks into a smoothly scaling AI generating machine. The platform can track data lineage, do data/code/model version control and easily stack lots of experimental and cutting edge packages together like beads on a string. If you can package up your program in a Docker container you can easily run it in Pachyderm.

I created a complete walkthrough that you can check out right here on GitHub. It allows you to recreate the entire pipeline and train the model yourself.

I’ve also included several fully trained models you can download here and try out yourself. There are two Ambient music models and two Berlin Techno models.

Lastly, I included a Docker container with the fully trained Ambient model and seed files so you can start making music fast.

It’s time to make some music!

Creating Beautiful Music Together

If you don’t want to recreate the entire pipeline, then I’ve made it super easy for you to play with our trained model and generate your own music.

Make sure you have Docker Desktop installed and running, then pull down the Ambient Music Transformer container to get started.

I walk you through every step, from pulling the container down, to running it and generating MIDI files of brand new ambient music in the section of the GitHub tutorial called “Generating Songs.”

In just a few minutes you’ll be able to generate your own songs with ease.

Playing Your Song

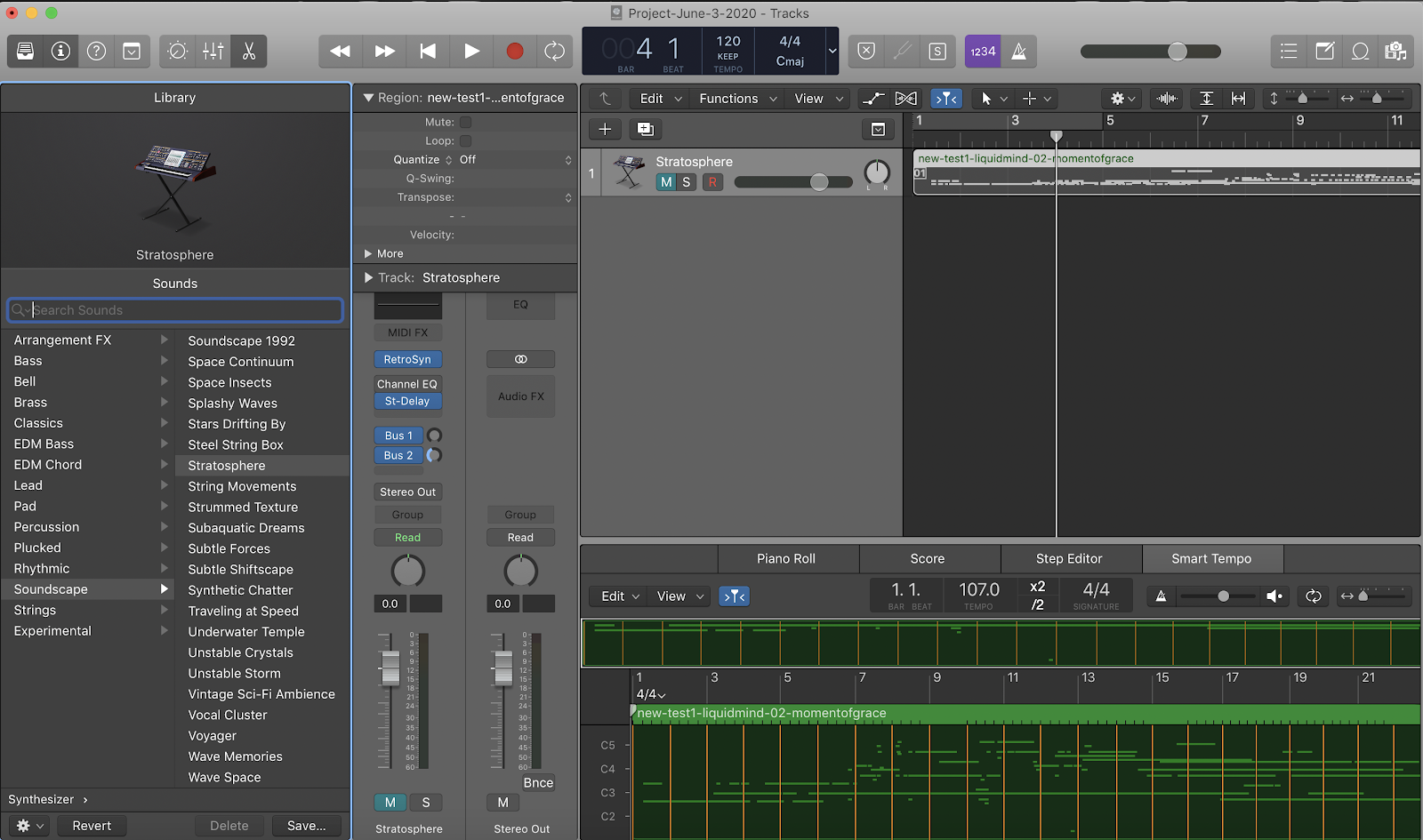

Once you’ve generated a few songs, there’s one last step. That’s where we bring in our human-in-the-loop creativity.

We play our MIDI through various software instruments to see how it sounds. The software instruments are what bring our song to life.

Different instruments create very different songs. If you play your MIDI through a drum machine or a piano it will sound like random garbage because ambient music is more irregular than a drum or piano piece.

But pick the right ambient instrument and you might just have musical magic at your fingertips.

Of course, you could automate this step but you’ll need to find a rich collection of open source software instruments. They’re out there, but if you have a Mac you already have a rich collection of software instruments in Apple’s Logic Pro. That felt like the best place to start so I could try lots of iterations fast. If you don’t own Logic Pro you can install a 60-day trial version from the Mac App Store that is fully featured and not crippleware.

If you don’t want to use Logic Pro, there’s lots of amazing music creation software to choose from, like Ableton Live and Cubase. You can also use GarageBand, which is free. If you’re a musical magician then go wild and unleash your favorite software collection on those AI generated songs.

But if you’re using Logic Pro like me, then you just import the MIDI and change out the instrument in the software track.

After days of experimentation I found a few amazing software instruments that consistently delivered fantastic songs when the Music Transformer managed to spit out a great sample.

- Stratosphere
- Deterioration
- Peaceful Meadow
- Calm and Storm

Here are some more of my favorite samples:

Several software instruments give the very same MIDI a strong, sci-fi vibe that feels otherworldly, as if I were flying through space or dropped down into a 1980s sci-fi synthwave blockbuster:

- Underwater Temple
- Parallel Universe

Here are a few fun sci-fi samples:

Music Transformer does a terrific job depending on what you feed it. Some seeds really make the Music Transformer sing.

But not all seeds are created equal. It really struggles sometimes.

No software instrument can save a terrible output no matter how hard you try.

Where We Fell Short

The model doesn’t always perform well. Sometimes it generates weird samples that don’t sound great no matter what software instruments we run them through.

The model seems to have brain damage when it comes to Subtractive Lad or Brian Eno tracks, often starting a song with a minute of silence and following that with one or two nearly endless notes.

Here’s a bizarre, nine-minute song that got generated from a Leandro Fresco track, with bits of total silence and the same long, mournful notes throughout.

Here’s another sample that just sounds like 18 seconds of oscillating fans:

We strongly suspect that if we had a big corpus of MIDI files that came directly from the musical artists themselves we’d have an even stronger model. That would skip the imperfect transcription step and deliver something much closer to their original visions.

If we had also tried fine tuning the original transcription model away from just piano, we could probably get better ambient transcriptions, leading to better generative models.

But overall we love the results that Music Transformer delivered.

Considering how many of the decisions of this project and approach were based on the very human intelligence traits of gut instinct and intuition, it worked out much better than expected.

It could have all gone horribly wrong.

The internet is littered with AI music creation gone wrong.

Where That Leaves Us and Where It’s All Going

Music and AI may just share a brilliant future together. We will likely see more artists co-composing with their AIs. We might see 3D animators creating virtual boy bands and engineers staging holographic concerts. We could even see AI and DJs creating music on the fly, based on moods and the wild gyrations of the late night dance crowd.

As for the AI models and algorithms, they’ll only continue to get better.

I loved doing this project. It thrilled me to wait for the Transformer to pop out a new song. I sometimes spun up multiple containers so it could crank out many at the same time. I watched and waited, wondering if this one would surprise and delight me as I popped it into Logic Pro.

Sometimes it even did surprise me.

I found myself fascinated by certain songs the model created, playing them again and again with different software instruments, trying to tease out the best in them.

Of course, sometimes they were duds too but that’s all right. It was easy to move on to a new one.

I often found myself editing away while waiting for another song to generate. Eventually, I fell into a rhythm with the process, creating a kind of virtuous loop with human and machine working together. It’s likely future artists will do the same and more, co-creating with the algorithms in ways I can’t yet imagine.

Artists have always used the latest tools and technology to advance the arts. Art itself is infinitely malleable and adaptable to the artists of the age.

AI stands to give them brand new tools to create in brand new ways.

I can’t wait to see what tomorrow’s master musicians create with their intelligent machines.

The Team Behind the Ambient Music Project

Kevin Scott

Kevin Scott (@thekevinscott) is an engineer whose attention to design led him to build uniquely human-centered products for Venmo, GE Healthcare, and ngrok among other leading technology ventures. His book, Deep Learning in JavaScript, explores the potential of bringing Neural Nets to the Web, a topic further explored in “AI & Design,” a series of talks aimed at bridging the gap between designers and AI technologists. He can be found writing on the web at thekevinscott.com.

Daniel Jeffries

Dan Jeffries is Chief Technology Evangelist at Pachyderm. He’s also an author, engineer, futurist, pro blogger and he’s given talks all over the world on AI and cryptographic platforms. He’s spent more than two decades in IT as a consultant and at open source pioneer Red Hat.

With more than 50K followers on Medium, his articles have held the number one writer’s spot on Medium for Artificial Intelligence, Bitcoin, Cryptocurrency and Economics more than 25 times. His breakout AI tutorial series “Learning AI If You Suck at Math” along with his explosive pieces on cryptocurrency, “Why Everyone Missed the Most Important Invention of the Last 500 Years” and “Why Everyone Missed the Most Mind-Blowing Feature of Cryptocurrency,” are shared hundreds of times daily all over social media and have been read by more than 5 million people worldwide.

You can check out some recent talks at the Red Hat OpenShift Commons and for the 2b Ahead Think Tank in Berlin.