The LA-based dance-pop trio YACHT just released their new album, a collaboration with Magenta and other ML researchers and artists! We hope to be able to feature a more detailed post in the future from the band, but for now I wanted to share a bit of background and some of my thoughts on the work.

I highly recommend you listen to the full thing, but go ahead and spin one of the singles as you read the rest of the post:

Every song on the album was composed using Magenta’s MusicVAE model, all lyrics were generated by an LSTM trained by Ross Goodwin, and parts were performed on Magenta and Creative Lab’s NSynth Super instrument. Visual aspects of the album were also made using generative neural networks, including the album cover by Tom White’s adversarial perception engines and GAN-generated promotional images by Mario Klingemann. The videos include sequences generated with pix2pix and fonts from SVG-VAE using implementations that are available in Magenta’s GitHub repo.

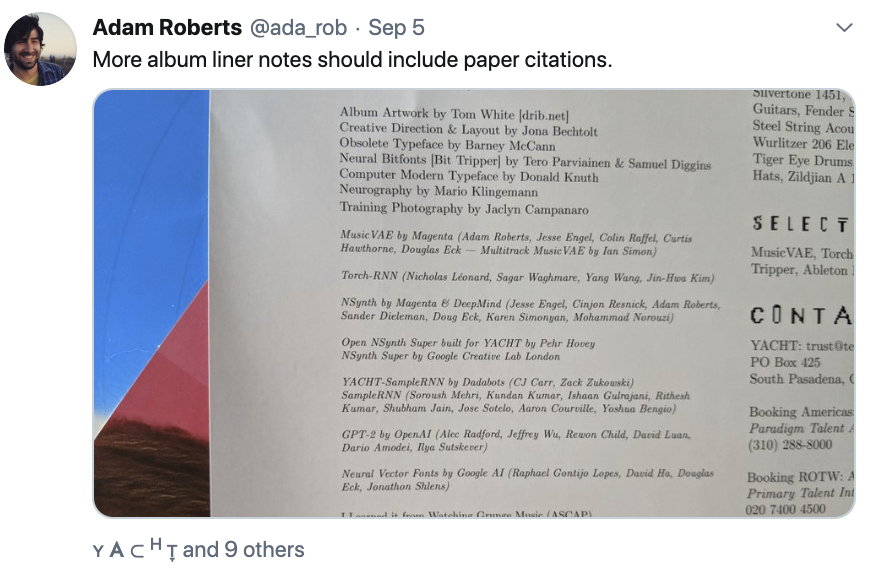

For a more exhaustive explanation of the processes and models involved, check out the excellent Ars Technica article and watch Claire Evans’s presentation at Google I/O. Or you can read the album’s liner notes:

Even though the genre is not typically my go-to, I am really in love with this album. You can feel the musicians struggling to find their voice within the “uncanny valley” of MusicVAE, resulting in a compelling and unique piece of art. YACHT took advantage of the VAE’s latent space and encoder by “conditioning” on their earlier compositions via various latent space manipulations (e.g., interpolating between their old compositions). They didn’t take the outputs of the models strictly as-is, but allowed themselves a few alterations: selecting individual tracks from multi-instrument output and recombining them with others, transposing to match keys when combining different outputs, and adding their own performance and production to the MIDI output. NSynth adds another layer of “weirdness” so you never feel fully sure of what you’re actually hearing. The most important part is that the album is fun to listen to even if you’re not familiar with all of the technology behind it, and it sounds like YACHT.
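For readers curious what "interpolation in latent space" and "transposing to match keys" look like in practice, here is a minimal sketch. It is not YACHT's actual workflow or the MusicVAE API: the latent vectors are stand-in lists of floats (in a real setup they would come from the model's encoder, and the interpolated points would be passed to its decoder), and the helper names `slerp` and `transpose` are hypothetical. Spherical interpolation is used here because it is a common choice for Gaussian latent spaces.

```python
import math


def slerp(z0, z1, t):
    """Spherically interpolate between latent vectors z0 and z1.

    t=0 returns z0 and t=1 returns z1. Both vectors are assumed non-zero.
    """
    norm0 = math.sqrt(sum(a * a for a in z0))
    norm1 = math.sqrt(sum(b * b for b in z1))
    cos_omega = sum(a * b for a, b in zip(z0, z1)) / (norm0 * norm1)
    # Clamp to guard against floating-point drift outside [-1, 1].
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))
    sin_omega = math.sin(omega)
    if sin_omega < 1e-8:
        # Vectors are (nearly) parallel: fall back to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(z0, z1)]
    w0 = math.sin((1 - t) * omega) / sin_omega
    w1 = math.sin(t * omega) / sin_omega
    return [w0 * a + w1 * b for a, b in zip(z0, z1)]


def transpose(pitches, semitones):
    """Shift a list of MIDI pitch numbers by a number of semitones."""
    shifted = [p + semitones for p in pitches]
    if any(p < 0 or p > 127 for p in shifted):
        raise ValueError("transposed pitch falls outside the MIDI range 0-127")
    return shifted


# Stand-in latent codes for two "old compositions" (hypothetical values).
z_old_a = [1.0, 0.0]
z_old_b = [0.0, 1.0]

# Five evenly spaced points along the path from one composition to the other.
path = [slerp(z_old_a, z_old_b, i / 4) for i in range(5)]

# Move a C major triad up a whole step so it matches a part in D.
d_major = transpose([60, 64, 67], 2)  # [62, 66, 69]
```

Each point on `path` would decode to a melody that sits somewhere between the two source compositions, which is the kind of material the band could then select from, transpose, and recombine.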

This collaboration with YACHT has been a long one for Magenta, starting all the way back in 2016. Since the beginning, much of our research has been driven by the desire to provide musicians and artists with more control over the generation capabilities of deep learning technology. We have always believed in the potential of using AIA (artificial intelligence augmentation) as a means to create an entirely new type of music, and we’re delighted to see it happening!

Thanks to YACHT, and to everyone else experimenting with these techniques in their work, for making the future just a little bit cooler.

If you enjoy the album, please make sure you support all of the artists involved!