Web apps built with Magenta.js

This section includes browser-based applications, many of which are implemented with TensorFlow.js for WebGL-accelerated inference.

An app that makes it easier to explore and curate samples from a piano Transformer model.

Play music in real time with a machine learning drummer that drums along to your melody.

MidiMe is a machine learning experiment to train a small model to sound like you. All the training happens directly in the browser using TensorFlow.js – no servers or backends here!

Magenta Studio is a collection of music plugins for Ableton Live built on Magenta’s open source tools and models. The plugins can also be downloaded as standalone native apps with no additional dependencies.

RUNN = 🏃Run + 🤖RNN. A side-scrolling game where the player has to finish the level to hear the full song. Each level is generated in real time with a MusicRNN model.

A web-based intelligent music application built on MelodyRNN and DrumsRNN, powered by Magenta.js.

A web-based game built on melody interpolations from MusicVAE. Listen to the music to work out the right order, or “sort” the song.

Every time you start drawing a doodle, Sketch RNN tries to finish it and match the category you’ve selected.

Use machine learning to have some fun pretending you’re a piano virtuoso.

Converts raw audio to MIDI using Onsets and Frames, a neural network trained for polyphonic piano transcription.

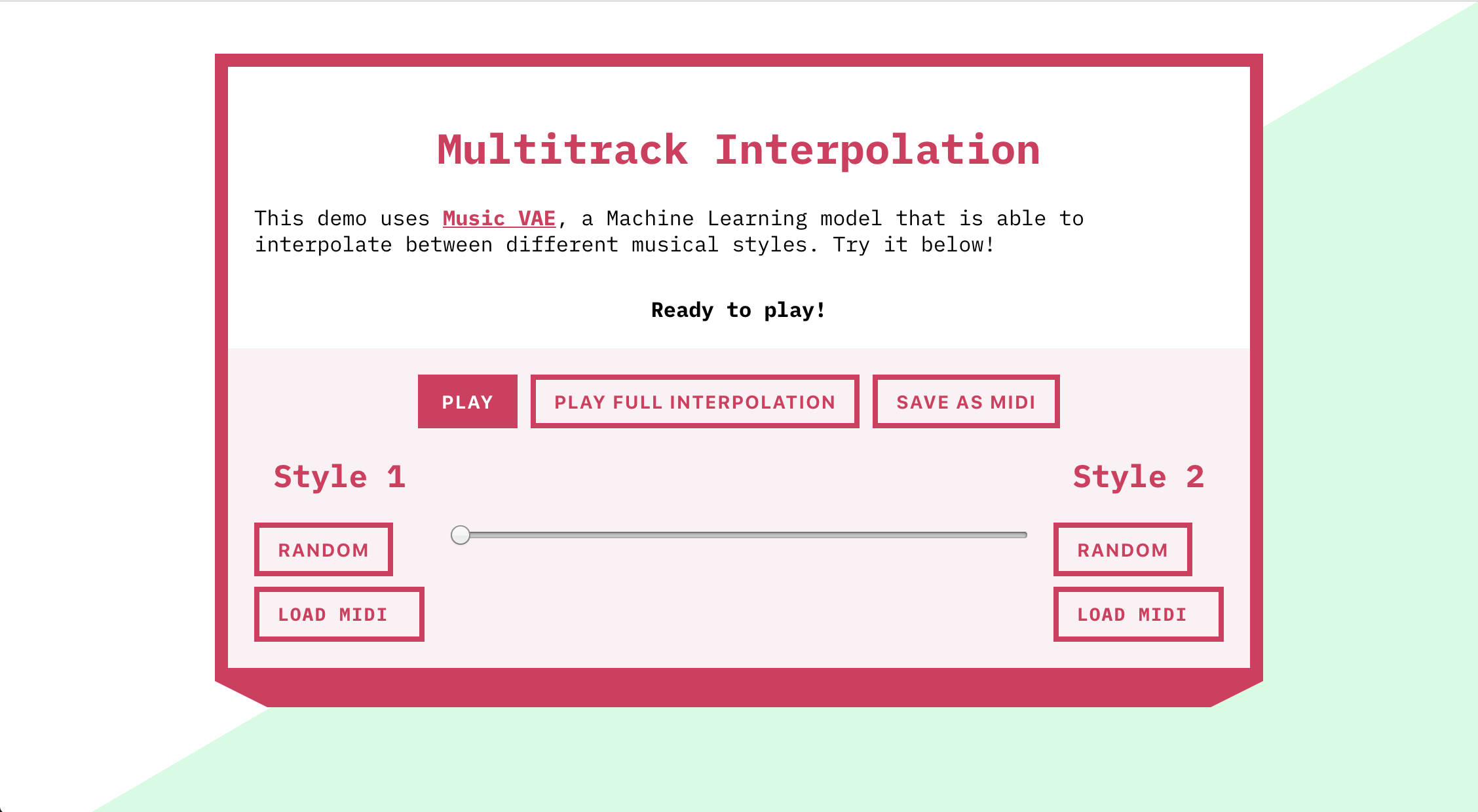

Uses the Magenta.js API with a Multitrack MusicVAE model to interpolate between two musical “styles”, either randomly generated or imported from MIDI.

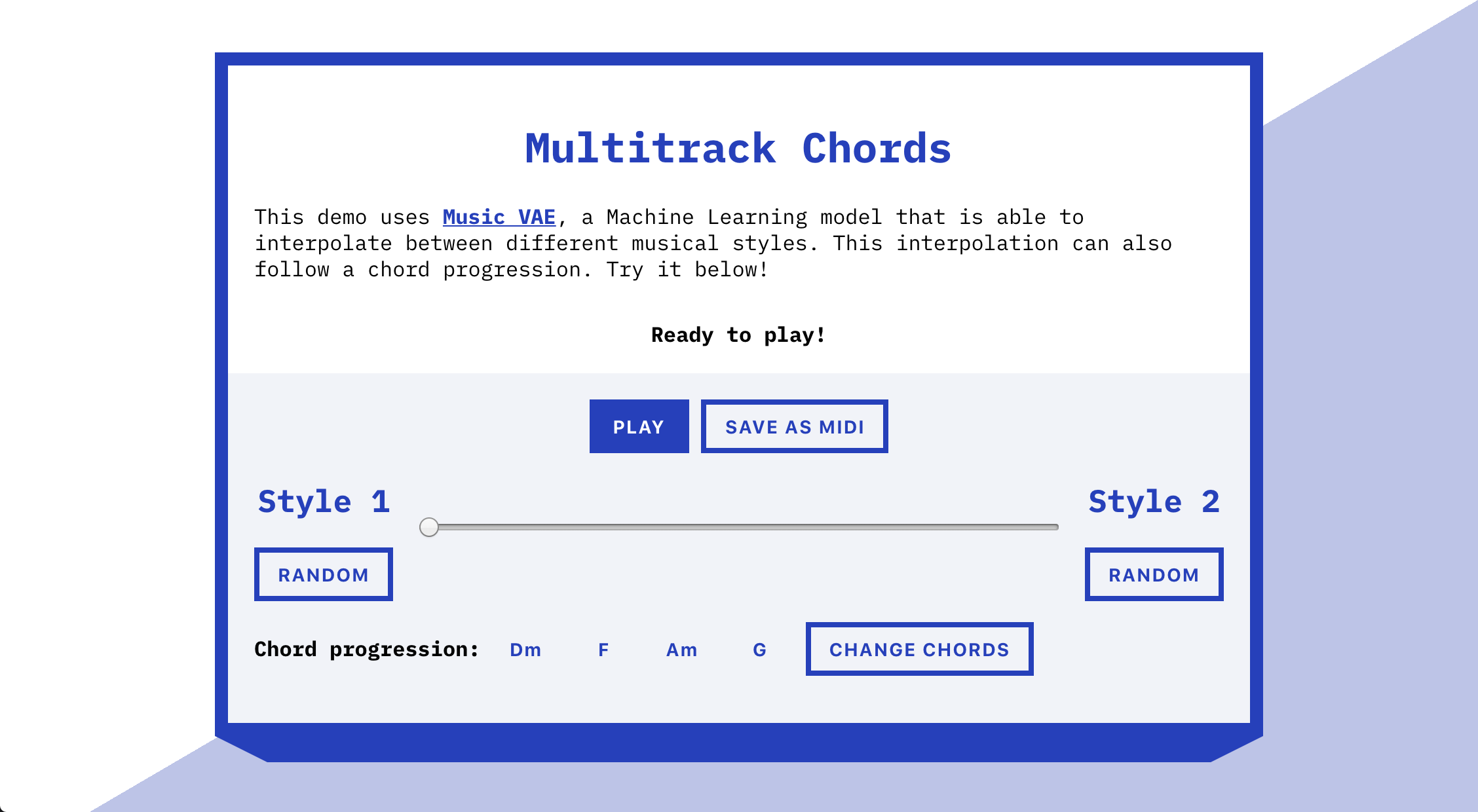

Uses the Magenta.js API with a Multitrack MusicVAE model to interpolate between two musical “styles” while playing an editable chord progression.

A demonstration of how simple it is to randomly generate a never-ending stream of trios from MusicVAE with Magenta.js.

Generate two-dimensional palettes of drum beats and draw paths through the latent space to create evolving beats. Built by Google Creative Lab using MusicVAE.

Sketch melodies on a matrix tuned to different scales, explore a palette of generated melodic loops, and sequence longer compositions using them. Built by Google’s Pie Shop using MusicVAE.

An interactive demo by Google Creative Lab, based on MusicVAE and built with the MusicVAE.js API. It lets you easily generate interpolations between short (2-bar) melody loops.

Real-time piano performances from PerformanceRNN in the browser, implemented with TensorFlow.js.

Start drawing, and the neural network will complete your sketch, or start from wherever you left off. One of several interactive web demos that let you draw together with SketchRNN.

An interactive AI Experiment based on NSynth, made in collaboration with Google Creative Lab, that lets you interpolate between pairs of instruments to create new sounds.

An interactive AI Experiment based on MelodyRNN, made in collaboration with Google Creative Lab, that lets you make music through machine learning. A neural network was trained on many MIDI examples and learned about musical concepts, building a map of notes and timings. Just play a few notes and see how the neural net responds.